Salvador

RF's Swedenborgian

That is not how odds work. Just because we have not been hit in the last 65 million years does not mean that the odds go up.
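A rough sketch of why a long quiet stretch does not raise the odds, assuming impacts arrive at a constant average rate (a Poisson process). The rate below is a made-up illustrative number, not a measured one, and the function names are hypothetical.

```python
import math

# ASSUMPTION: illustrative rate only, one extinction-level impact per 100 million years.
RATE_PER_YEAR = 1 / 100_000_000

def prob_impact_within(years, rate=RATE_PER_YEAR):
    """P(at least one impact in the next `years`) under a constant-rate model."""
    return 1 - math.exp(-rate * years)

def prob_impact_within_given_quiet(years, quiet_years, rate=RATE_PER_YEAR):
    """Same probability, but conditioned on `quiet_years` already passed with no impact."""
    # P(no impact during the quiet period)
    survived = math.exp(-rate * quiet_years)
    # P(first impact falls in the window right after the quiet period)
    hit_in_window = survived - math.exp(-rate * (quiet_years + years))
    return hit_in_window / survived

fresh = prob_impact_within(1_000_000)
after_quiet = prob_impact_within_given_quiet(1_000_000, 65_000_000)
print(fresh, after_quiet)  # the two values agree up to floating-point rounding
```

Under this assumption the 65 million quiet years cancel out of the calculation, so the odds for the next million years are the same either way.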

Existential risk is greater than the odds of a catastrophic asteroid impact, because there are many other events besides this that could cause human extinction.
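To put that in numbers, here is a minimal sketch assuming the different causes are independent of each other. The probabilities are placeholders for illustration, not estimates from any source, and `combined_risk` is a hypothetical helper.

```python
def combined_risk(probabilities):
    """P(at least one event happens), assuming the events are independent."""
    none_happen = 1.0
    for p in probabilities:
        none_happen *= 1.0 - p  # chance that this particular event does not happen
    return 1.0 - none_happen

# ASSUMPTION: placeholder per-century probabilities, chosen only for illustration.
asteroid = 0.0001
other_causes = [0.001, 0.002]

total = combined_risk([asteroid] + other_causes)
print(total >= asteroid)  # the combined risk is never below any single cause
```

Even without the independence assumption, the chance that at least one of several events happens can never be smaller than the chance of any one of them.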

Existential risk - RationalWiki

Global catastrophic risk - Wikipedia

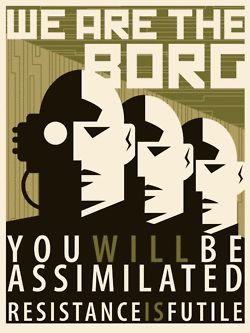

I foresee human intelligence merging with artificial intelligence by way of AI implants in almost everybody's mind, which could then be networked together into a collective intelligence that is nearly omniscient and nearly omnipresent.

I reckon there might be some human resistance to such a movement, but inevitably such resistance to a collective cyborg intelligence would be futile.
